Early Fusion of Visual and Ingredient Representations for Multimodal Food Classification

DOI:

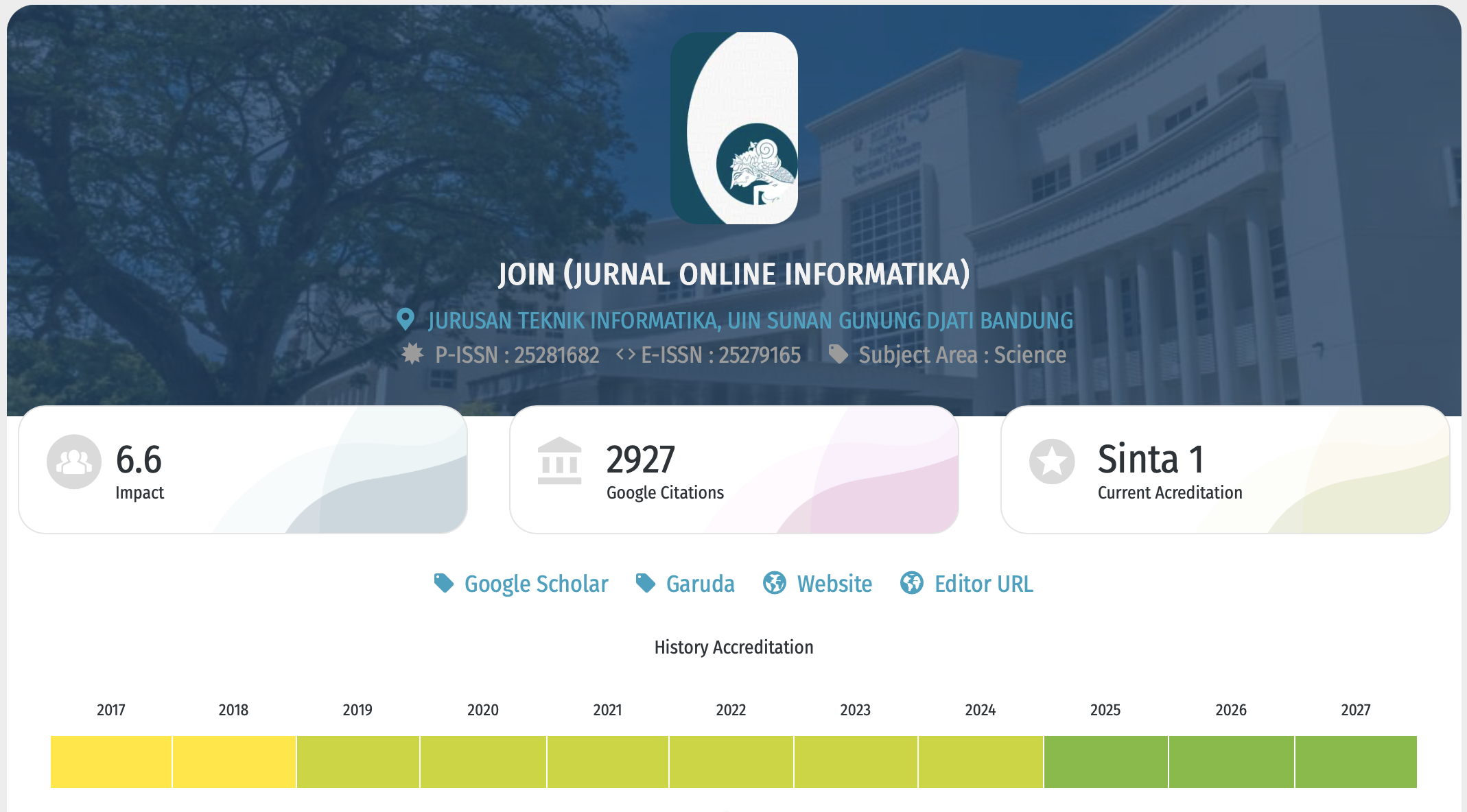

https://doi.org/10.15575/join.v11i1.1725Keywords:

Artificial Intelligence, CLIP, Early Fusion, Food Recognition, Multimodal ClassificationAbstract

License

Copyright (c) 2026 Navira Rahma Salsabila, Adela Regita Azzahra, Fitri Utaminingrum, Barlian Henryranu Prasetio

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.

You are free to:

- Share: copy and redistribute the material in any medium or format for any purpose, even commercially.

The licensor cannot revoke these freedoms as long as you follow the license terms.

Under the following terms:

- Attribution: You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

- NoDerivatives: If you remix, transform, or build upon the material, you may not distribute the modified material.

- No additional restrictions: You may not apply legal terms or technological measures that legally restrict others from doing anything the license permits.

Notices:

- You do not have to comply with the license for elements of the material in the public domain or where your use is permitted by an applicable exception or limitation.

- No warranties are given. The license may not give you all of the permissions necessary for your intended use. For example, other rights such as publicity, privacy, or moral rights may limit how you use the material.

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License