Scalability Testing of Land Forest Fire Patrol Information Systems

DOI:

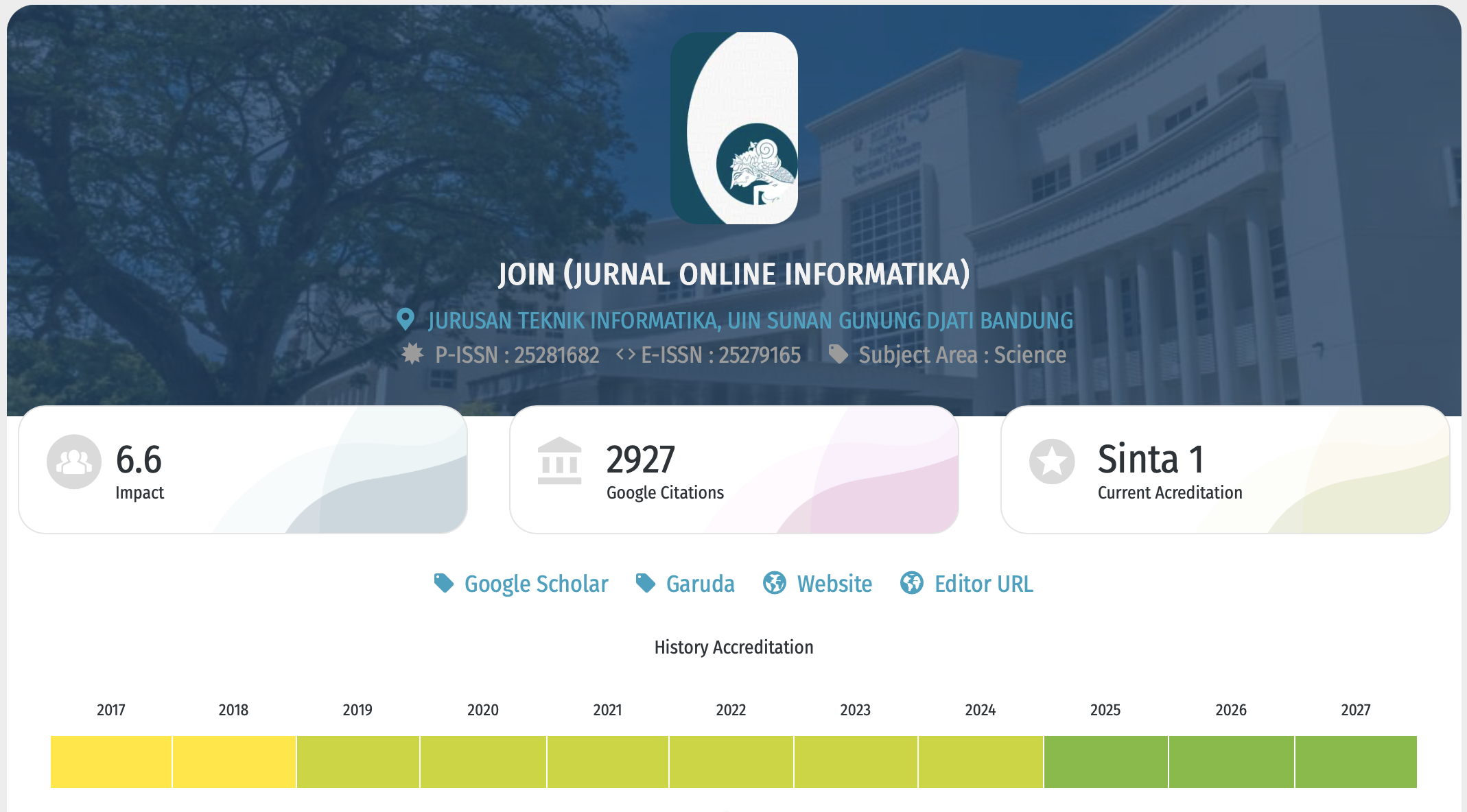

https://doi.org/10.15575/join.v8i1.977Keywords:

Fires, Scalability, Software, TestingAbstract



License

Copyright (c) 2023 Jurnal Online Informatika

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.

You are free to:

- Share — copy and redistribute the material in any medium or format for any purpose, even commercially.

- The licensor cannot revoke these freedoms as long as you follow the license terms.

Under the following terms:

- Attribution: You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

- NoDerivatives: If you remix, transform, or build upon the material, you may not distribute the modified material.

- No additional restrictions: You may not apply legal terms or technological measures that legally restrict others from doing anything the license permits.

Notices:

- You do not have to comply with the license for elements of the material in the public domain or where your use is permitted by an applicable exception or limitation.

- No warranties are given. The license may not give you all of the permissions necessary for your intended use. For example, other rights such as publicity, privacy, or moral rights may limit how you use the material.
