Maleo-Short: An "In-the-Wild" Indonesian Dataset for Speaker Diarization

DOI:

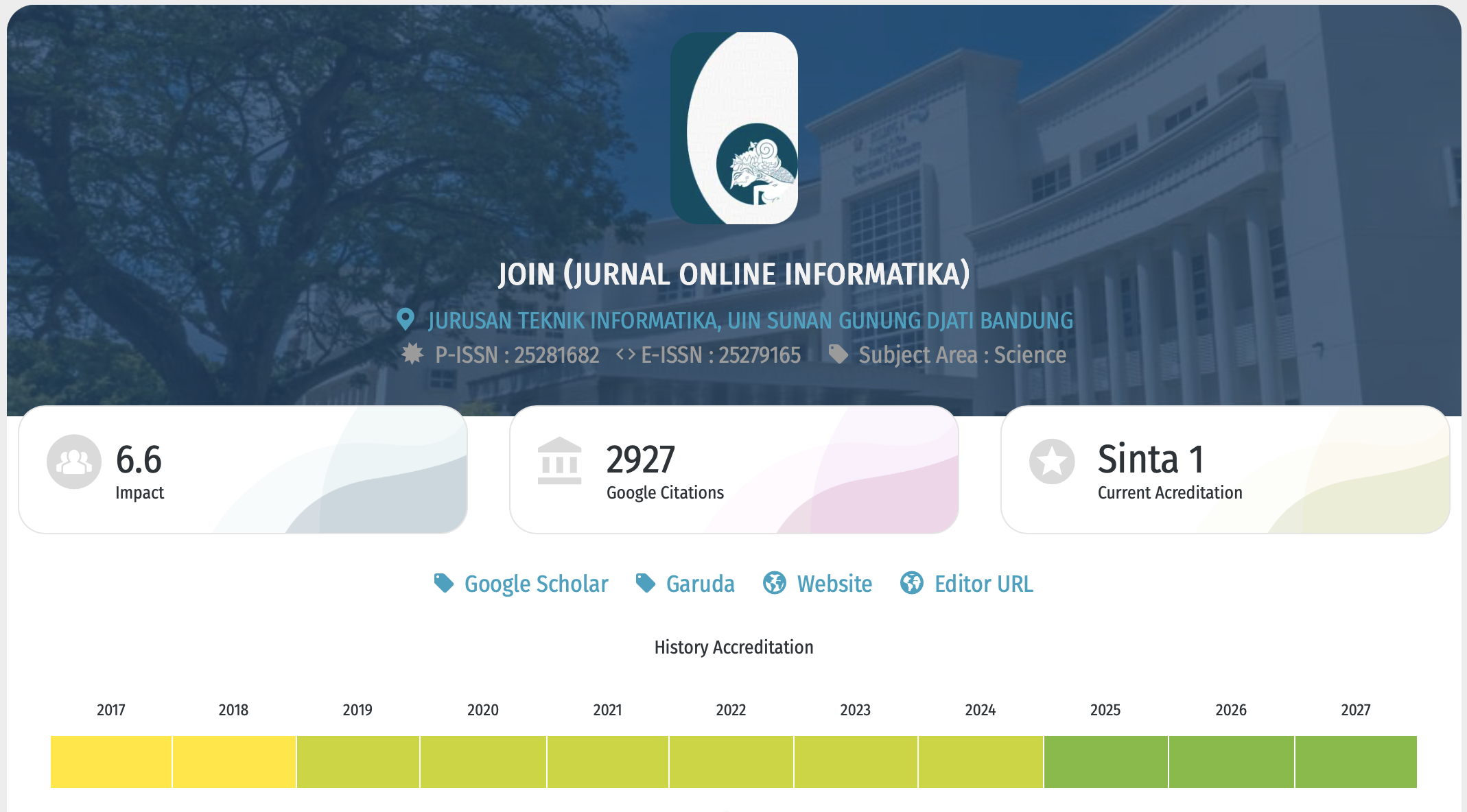

https://doi.org/10.15575/join.v11i1.1781Keywords:

Indonesian, In-the-wild Speech, Speech Dataset , Speaker DiarizationAbstract

License

Copyright (c) 2026 Ardi Mardiana, Dinda Desmonda Muslimah, Ade Bastian, Eka Tresna Irawan

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.

You are free to:

- Share: copy and redistribute the material in any medium or format for any purpose, even commercially.

- The licensor cannot revoke these freedoms as long as you follow the license terms.

Under the following terms:

- Attribution: You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

- NoDerivatives: If you remix, transform, or build upon the material, you may not distribute the modified material.

- No additional restrictions: You may not apply legal terms or technological measures that legally restrict others from doing anything the license permits.

Notices:

- You do not have to comply with the license for elements of the material in the public domain or where your use is permitted by an applicable exception or limitation.

- No warranties are given. The license may not give you all of the permissions necessary for your intended use. For example, other rights such as publicity, privacy, or moral rights may limit how you use the material.
