Data Balancing Techniques Using the PCA-KMeans and ADASYN for Possible Stroke Disease Cases

DOI:

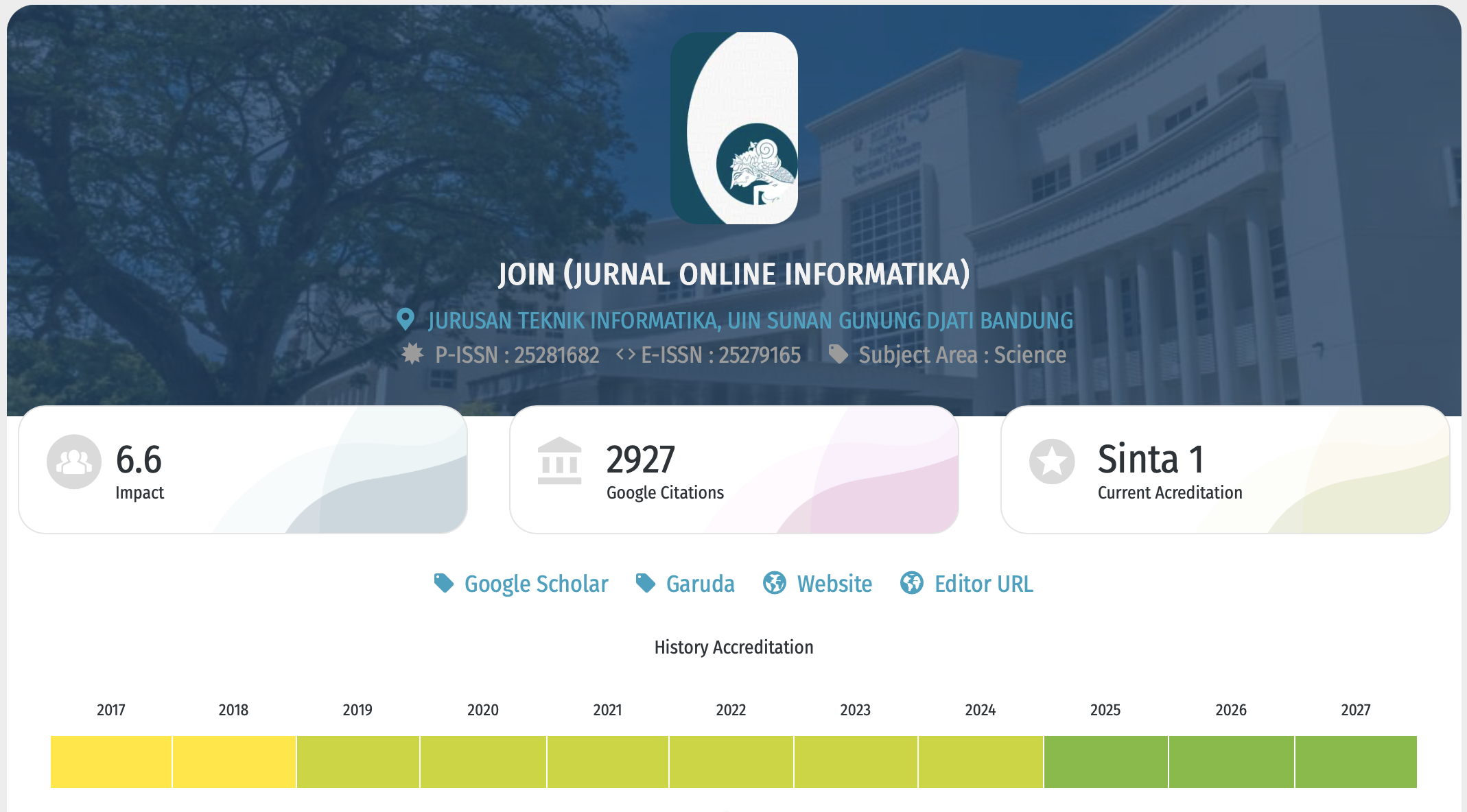

https://doi.org/10.15575/join.v9i1.1293Keywords:

ADASYN, Imbalanced Data, Machine Learning, PCA-KMeans, StrokeAbstract

License

Copyright (c) 2024 Uung Ungkawa, Muhammad Avilla Rafi

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.

You are free to:

- Share: copy and redistribute the material in any medium or format for any purpose, even commercially.

- The licensor cannot revoke these freedoms as long as you follow the license terms.

Under the following terms:

- Attribution: You must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use.

- NoDerivatives: If you remix, transform, or build upon the material, you may not distribute the modified material.

- No additional restrictions: You may not apply legal terms or technological measures that legally restrict others from doing anything the license permits.

Notices:

- You do not have to comply with the license for elements of the material in the public domain or where your use is permitted by an applicable exception or limitation.

- No warranties are given. The license may not give you all of the permissions necessary for your intended use. For example, other rights such as publicity, privacy, or moral rights may limit how you use the material.
